Hey, it’s Andreas.

This could be a significant week for AI.

Nvidia GTC is back in San Jose, and in recent years Jensen Huang’s keynote has become the AI world's equivalent of an Apple keynote.

If you don't want to sit through the full two-hour-plus keynote, here is a 15-minute supercut with all the key announcements: AI factories, agentic systems, next-gen chips, networking, and robotics.

In today’s issue:

Yann LeCun launches AMI Labs with a reported $1.03B seed round

Meta acquires Moltbook and reportedly plans to cut around 20% of its workforce

Google rolls out Ask Maps, pushing Maps closer to a conversational AI layer

A deep dive into who’s the better writer: AI or humans?

And much more.

Let’s get into it.

Weekly Field Notes

🧰 Industry Updates

🌀 Yann LeCun launches AMI Labs with a $1.03B seed round → Turing Award winner Yann LeCun has left Meta to launch Advanced Machine Intelligence, and the company opened with a massive $1.03 billion seed at a $3.5 billion pre-money valuation.

🌀 Google rolls out Ask Maps → Users can now ask complex questions in plain English, and the system pulls from 300 million places and 500 million community reviews to return more contextual, personalized answers.

🌀 Google releases Gemini Embedding 2 → Gemini Embedding 2 is Google's first multimodal embedding model, mapping text, images, video, audio, and PDFs into a single shared semantic space.

🌀 Claude can now create charts and diagrams in chat → A powerful update, though for now it is only available in the chat interface.

🌀 Meta reportedly plans to cut headcount to fund AI infrastructure → According to Reuters, Meta is planning major layoffs that could reduce its workforce of nearly 79,000 by 20%.

🌀 Meta acquires Moltbook → The team behind Moltbook, the viral social platform for AI agents, has been folded into Superintelligence Labs.

🌀 Luma AI launches AI agents for creative workflows → A new unified workflow layer aimed at reducing tool sprawl across media types.

🌀 Cloudflare launches a /crawl API → Cloudflare now lets developers crawl an entire website with a single call (a notable move from a company long associated with blocking bots).

🎓 Learning & Upskilling

📘 Anthropic launches the Claude Certified Architect program → Anthropic has launched its first certification for AI builders, focused on agentic architecture, MCP, Claude Code, prompt engineering, and reliability.

📘 Top Free AI Learning Platforms from Industry Leaders → A reminder that some of the best AI education is free, from Anthropic and OpenAI to Nvidia, Google, IBM, and Hugging Face.

📘 Practical Overview of the AI Stack → AI is not one thing but a stack, from machine learning and deep learning to GenAI, RAG, and agents. That distinction matters, because confusing the layers often leads companies to pick the wrong tool for the problem at hand.

🌱 Perspectives & Research

🔹 Sam Altman on Flooding the World With Intelligence → At BlackRock’s U.S. Infrastructure Summit, Altman framed AI as a utility layer the world will scale into.

🔹 Jim Prosser on using Claude Code as a chief of staff → A sharp example of what Claude Code can already do today.

🔹 Julien Bek on why AI winners may sell work, not tools → Bek’s thesis: in the AI era, the biggest companies may look less like software vendors and more like services firms that use software to deliver outcomes. If you sell the work, model improvements become a tailwind, not a threat.

🔹 OpenAI on designing agents to resist prompt injection → OpenAI argues prompt injection is starting to look more like social engineering for agents, so the real priority is reducing blast radius, not just catching bad inputs.

🔹 Dwarkesh Patel on how Anthropic vs DoW is a warning shot → Patel argues the real long-term AI risk is not just capability, but who controls AI labor at societal scale.

🔹 Percepta on Turning Transformers Into Computers → Percepta shows LLMs can execute code inside the model itself, hinting at a future where the line between model and tool starts to blur.

🔹 Charles Chen on why MCP still wins at enterprise scale → CLI hype is real, but this piece argues that CLIs recreate many of the same context problems as MCP, just with less structure. For individual use, that may be fine. For enterprise adoption, MCP still looks like the more durable layer.

🔹 McKinsey’s AI platform Lilli just got hacked → The bigger takeaway: enterprise AI is no longer just about efficiency. It is now inseparable from cybersecurity strategy.

♾️ Thought Loop - What I've been thinking, building, circling this week

Are we reaching a point where people prefer AI-generated writing over human writing?

The New York Times just ran a blind test on exactly that question.

Kevin Roose and Stuart A. Thompson paired AI-written passages against human-written ones across fiction, science writing, and poetry.

More than 86,000 readers took the quiz.

54% preferred the AI (me included - you can take the test here).

Roose compared it to the Judgment of Paris, the 1976 blind wine tasting where California wines beat the French labels everyone assumed were untouchable.

That comparison works.

Not because AI has suddenly become the better writer.

But because it shows something more specific:

AI is getting very good at producing writing people like on first read.

That is not the same as originality.

It is not the same as depth.

And it is definitely not the same as voice.

Roose said the framing started with a question from Noam Brown at OpenAI:

Why are coders generally fine with AI-generated code, while writers resist AI-generated writing?

The answer:

Code is functional. Writing is aesthetic. People are protective of taste.

Exactly.

AI writing is usually smooth, clear, and structured.

Human writing often has friction.

A strange phrase.

An unexpected image.

A sentence that makes you stop and rethink.

That is often where the value is.

AI rarely does that well.

It can sound polished.

It can sound intelligent.

It can sound finished.

But most of the time it is giving you a highly optimized version of what good writing usually sounds like.

That is why these models do well in blind tests.

They produce text that is easy to read, easy to like, and easy to mistake for quality.

But easy to like is not the same as memorable.

And this is also why I think AI works best in writing as a refinement layer, not a replacement for original thought.

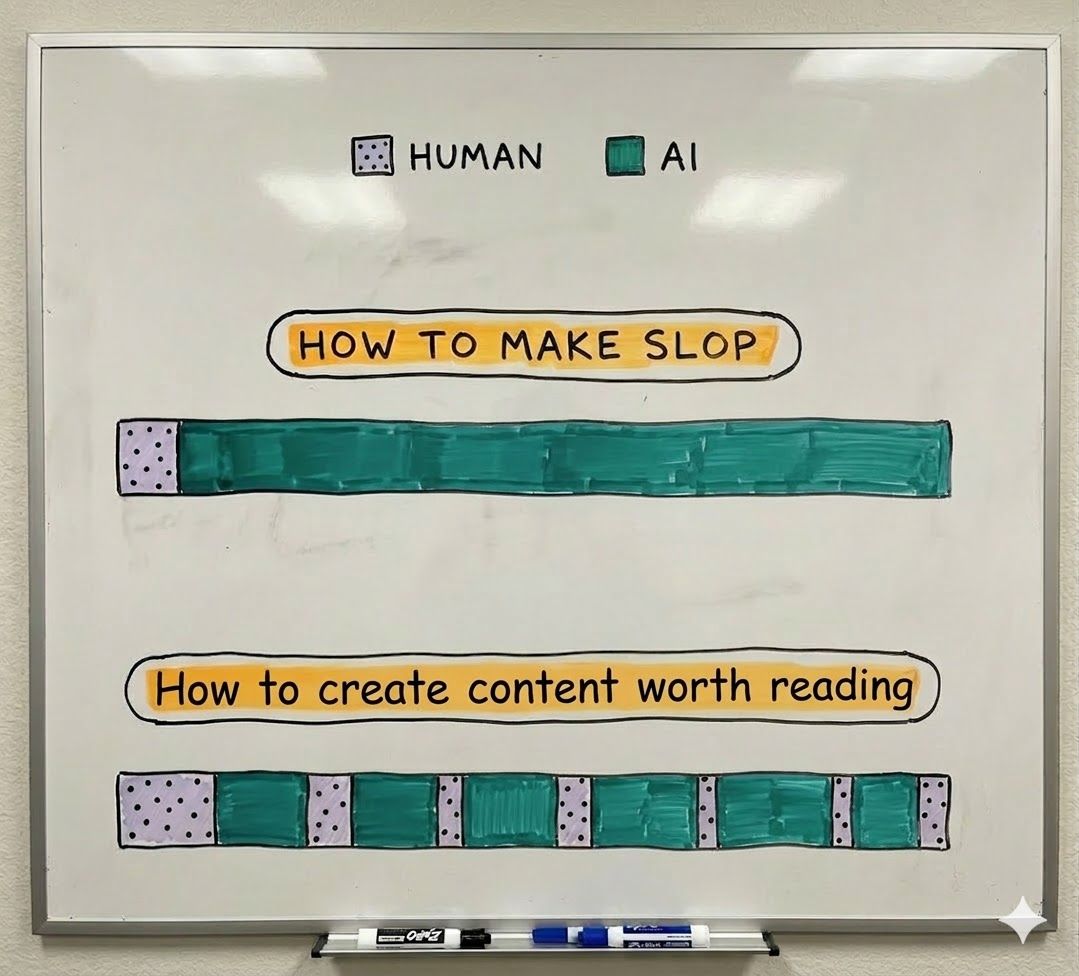

AI-only output often becomes slop. Strong work usually comes from back-and-forth refinement.

If you give AI your notes, your framing, your raw ideas, your rough edges, and then use it to tighten structure, improve flow, or sharpen phrasing, it becomes powerful.

If you ask it to generate from zero, it usually gives you something competent but empty.

Because there is no real point of view underneath it.

So I would not read the 54% result as "AI is beating human writers."

I would read it as this:

The floor for writing has collapsed.

Clean, competent, readable prose is no longer a moat.

Perspective is.

Taste is.

Original thinking is.

That is the real lesson.

Use AI for the iteration loop. Keep the core thinking yours.

That’s it for today. Thanks for reading.

Enjoy this newsletter? Please forward it to a friend.

See you next week, and have an epic week ahead,

- Andreas

P.S. I read every reply - if there’s something you want me to cover, or you'd like to share your thoughts, just let me know!